Recently a customer asked me whether it was possible to integrate a VMware Tanzu Kubernetes Grid (TKG) cluster with Azure Arc. Curious myself, I decided to investigate.

Azure Arc is a Microsoft service that extends Azure’s management capabilities to resources outside of the Azure environment. It allows you to manage your on-premises servers, virtual machines, and Kubernetes clusters using Azure’s management tools, policies, and features. With Azure Arc, you can use Azure’s management services, such as Azure Policy, Azure Monitor, and Azure Security Center (now Microsoft Defender for Cloud), to govern and monitor your resources regardless of their location.

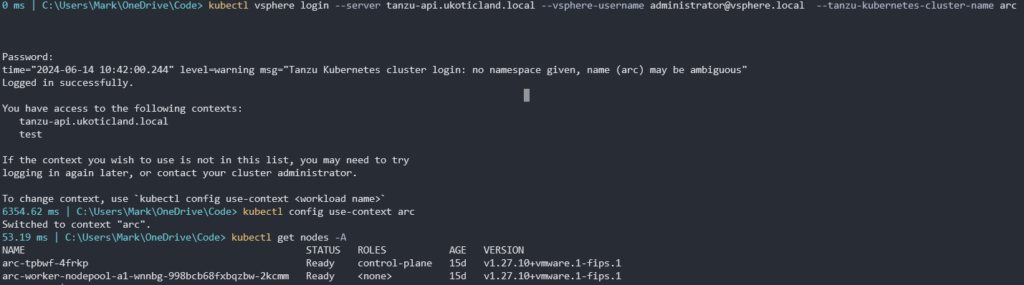

There are a few prerequisites to take care of before we get too far into installing and configuring the Arc integration. Obviously, the first is a provisioned VMware TKG workload cluster. It’s worth noting that I’m doing this on vSphere with Tanzu, using a TKG release that runs Kubernetes 1.25 or later. The Kubernetes version matters because Pod Security Policy (PSP) was removed in v1.25 and replaced by Pod Security Admission (PSA). I wrote a post on this recently. It means we need to perform a few additional steps immediately after starting the Arc installation, which I’ll cover further below.

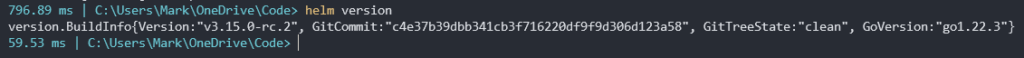

Once you have a TKG cluster up and running, you need a few tools on your workstation. The first is Helm. You can download the latest Helm release from GitHub. Extract the archive, add the folder containing the helm binary to your PATH environment variable, and then confirm you can execute it (for example, with helm version).

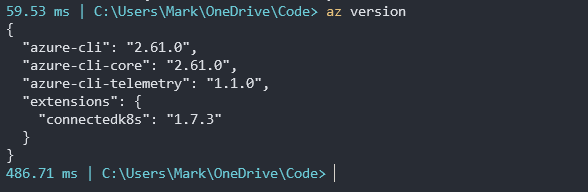

Next we need the Azure CLI. Download the installer for your operating system here and install it. Running az version will confirm that it installed correctly.
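If you want a quick preflight check before going further, a small shell function can confirm the tools are on your PATH. This is a minimal sketch assuming a POSIX shell (Linux, macOS, or WSL); the function name check_tools is hypothetical, and kubectl is included because the later steps rely on it.

```shell
# Hypothetical preflight helper: reports which required CLIs are on PATH.
# Returns non-zero if any of them is missing.
check_tools() {
  local missing=0
  for tool in helm az kubectl; do
    if command -v "$tool" >/dev/null 2>&1; then
      echo "found: $tool"
    else
      echo "missing: $tool" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Usage: check_tools && helm version && az version
```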

With those key tools installed on our workstation, we are ready to start the Azure Arc installation.

If you’re comfortable with Azure, you might be able to do all of this from the CLI. But if you’re like me and need a little help, you can start the process from the Azure portal. I hope it goes without saying, but you’ll need an Azure account with an active subscription to continue from this point.

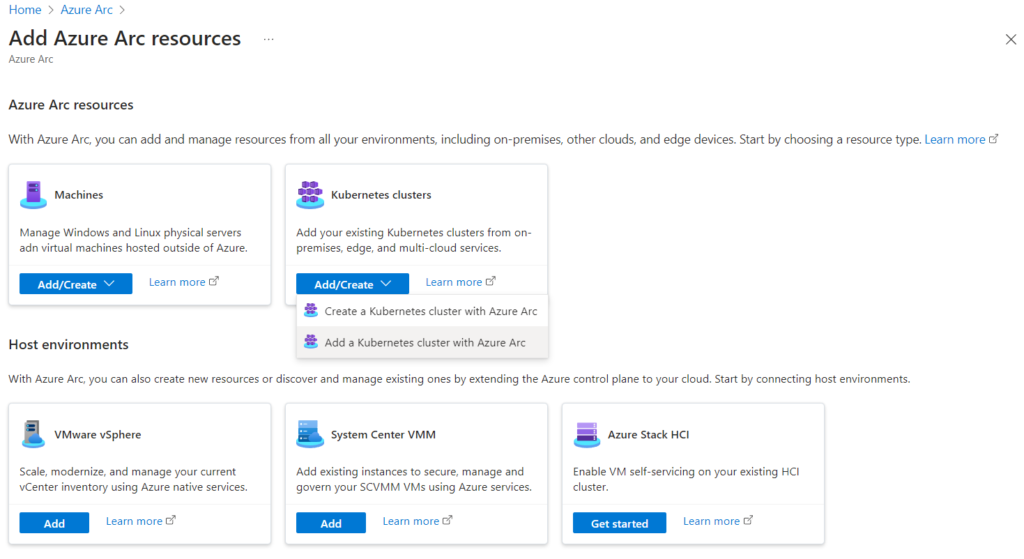

Head over to the Azure Arc service and select Add resources.

Select Add/Create on the Kubernetes clusters tile and click Add a Kubernetes cluster with Azure Arc.

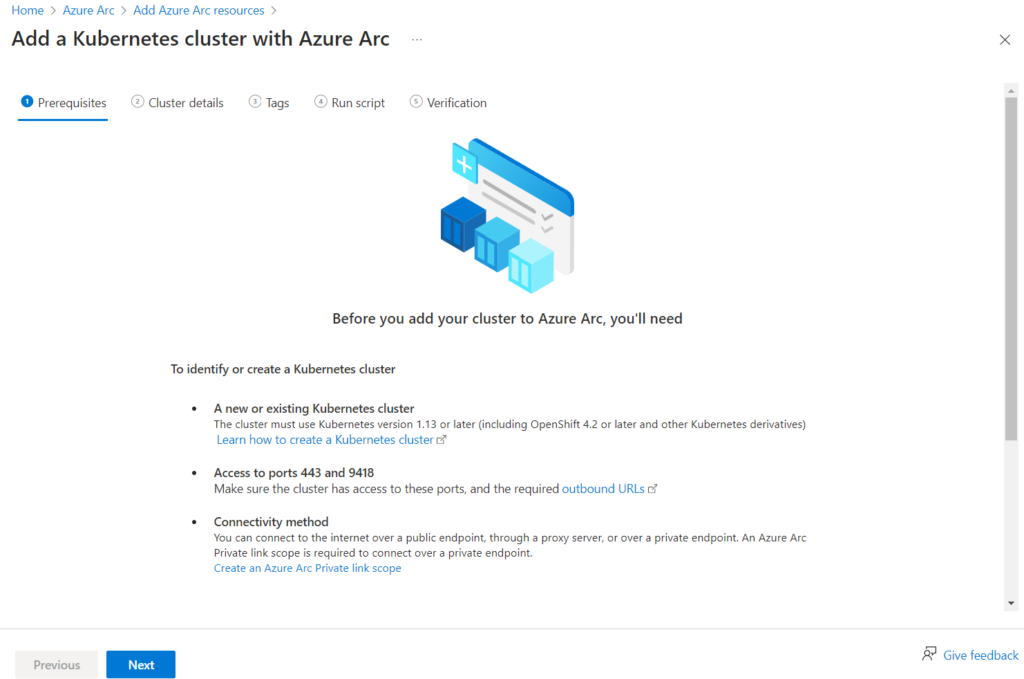

Review the Prerequisites and click Next.

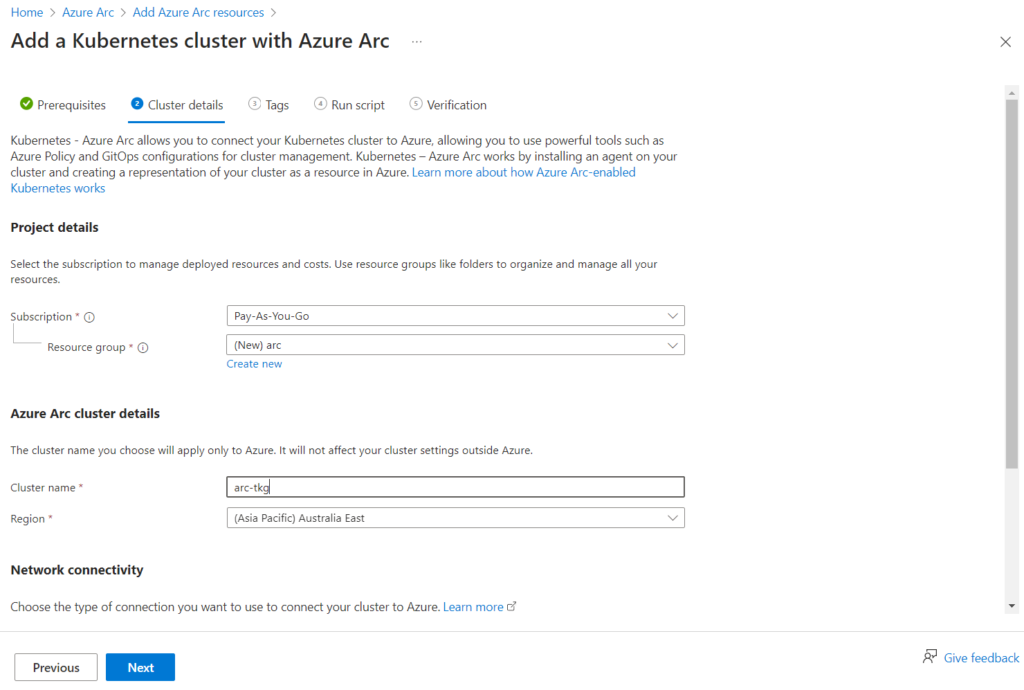

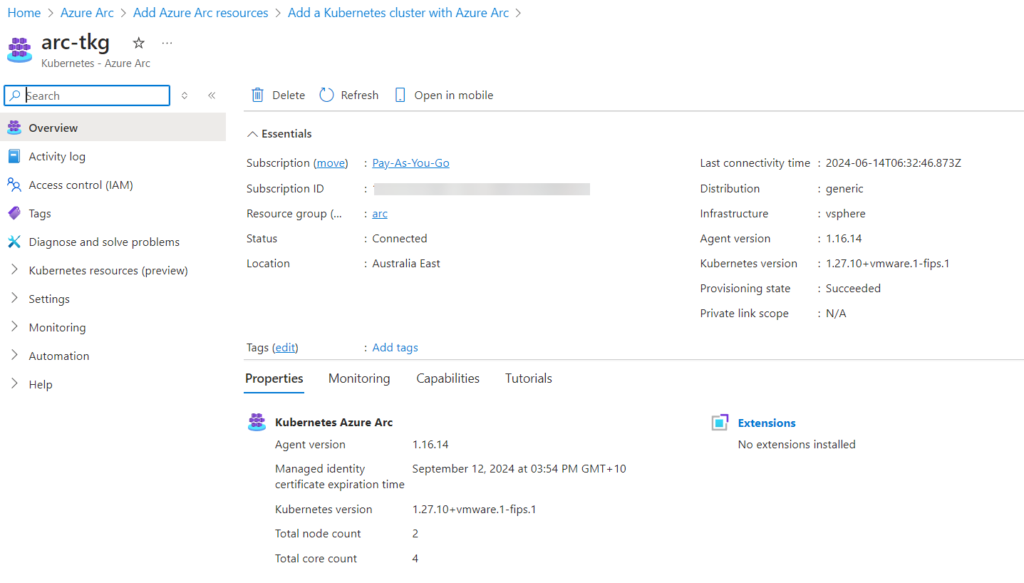

Select an existing resource group or create a new one. I created a new one called arc. Then define a cluster name (I used arc-tkg) and select an available Region. You’ll also need to select a Network connectivity method; I used Public endpoint. Click Next.

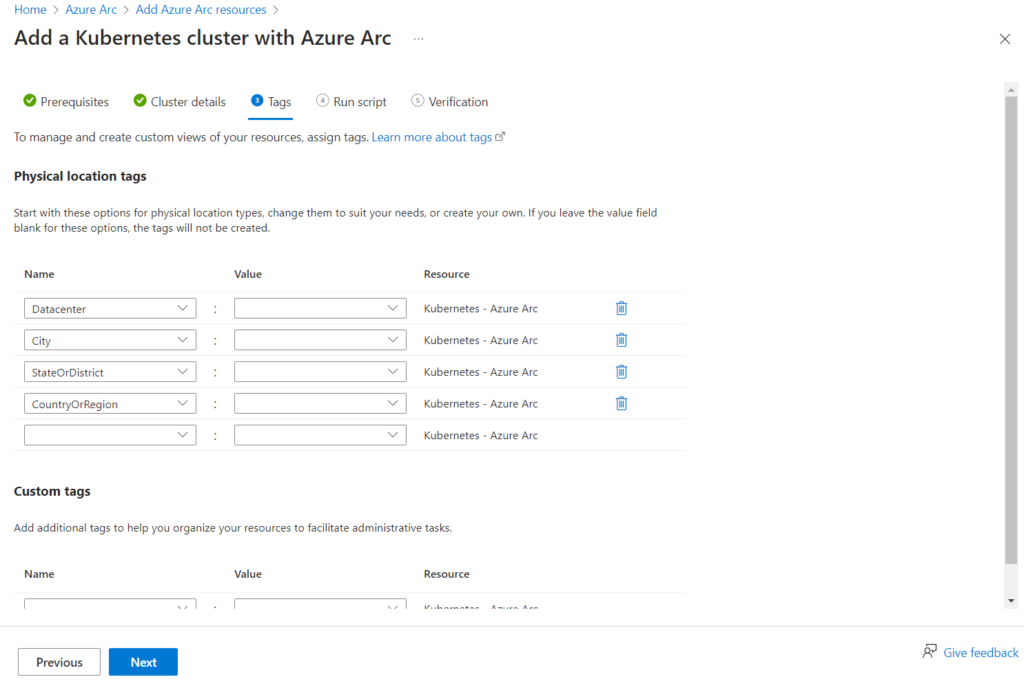

Here we can define some tags. The defaults are fine for now. Click Next.

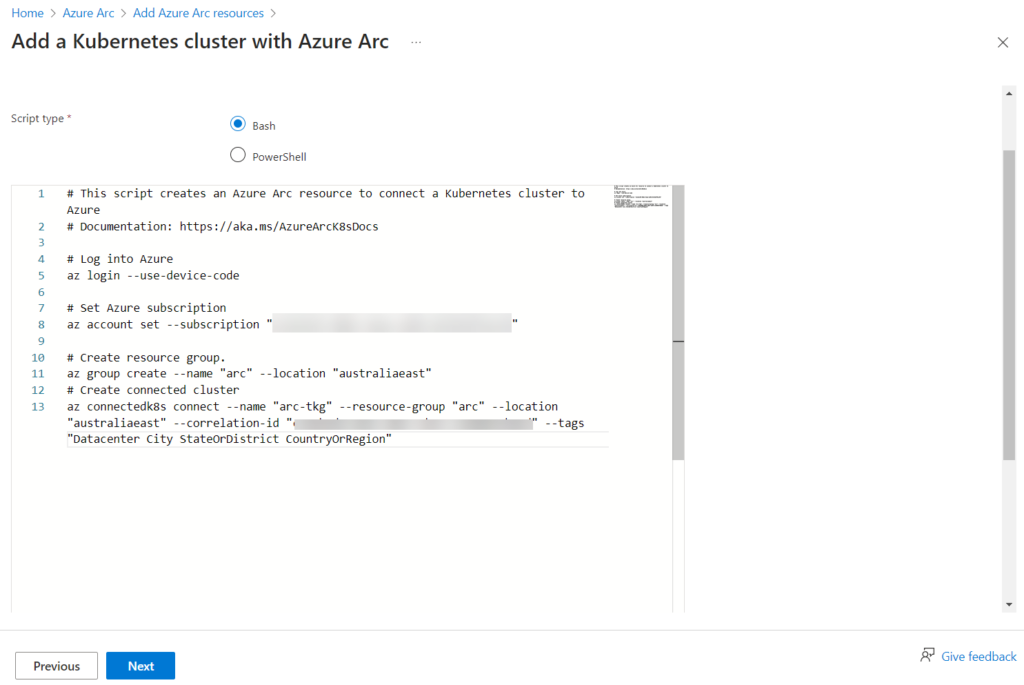

We are now presented with a set of commands that will deploy the Arc agents to our VMware TKG cluster and connect it to Azure Arc.

Leave this page open, but don’t click Next just yet; we will return after completing the installation from the CLI. Now jump back over to your CLI, where we will run the commands presented along with a few others. Make sure you’re still logged into your VMware TKG cluster and have the correct context set, because the Azure Arc process uses your current kubeconfig context for the integration.
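Because the connect command acts on whichever context is current, it can be worth checking it explicitly first. This is a minimal sketch assuming a POSIX shell; ensure_context is a hypothetical helper, and the context name in the usage line is a placeholder for your own TKG workload cluster context.

```shell
# Hypothetical helper: switch kubectl to the wanted context if it is not already current.
ensure_context() {
  local want="$1"
  local current
  current=$(kubectl config current-context) || return 1
  if [ "$current" != "$want" ]; then
    echo "switching context: $current -> $want"
    kubectl config use-context "$want" || return 1
  fi
  echo "using context: $want"
}

# Usage (placeholder context name): ensure_context my-tkg-workload-context
```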

Run the first three commands to log into Azure, set your subscription, and create the resource group. Don’t run the last command just yet!

az login --use-device-code

az account set --subscription "11111111-1111-1111-1111-111111111111"

az group create --name "arc" --location "australiaeast"

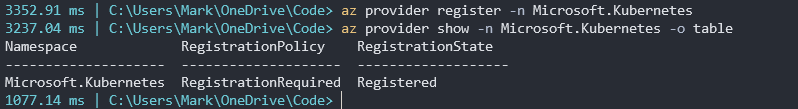

The following commands may or may not need to be run, depending on whether you have done this before. We need to register a few resource providers if they aren’t already registered. Registration can take a few minutes per provider; use az provider show to confirm that they all report Registered.

az provider register -n Microsoft.Kubernetes

az provider register -n Microsoft.KubernetesConfiguration

az provider register -n Microsoft.ExtendedLocation

az provider show -n Microsoft.Kubernetes -o table

az provider show -n Microsoft.KubernetesConfiguration -o table

az provider show -n Microsoft.ExtendedLocation -o table
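Rather than re-running the show commands by hand, you could script the register-and-wait loop. This is a sketch under assumptions: register_providers is a hypothetical helper, it polls the registrationState field via --query, and the POLL_SECONDS and MAX_ATTEMPTS variables (defaulting to 10 seconds and 60 attempts) are illustrative defaults, not Azure settings.

```shell
# Hypothetical helper: register the providers Arc needs, then poll until each
# reports "Registered". POLL_SECONDS and MAX_ATTEMPTS are illustrative defaults.
PROVIDERS="Microsoft.Kubernetes Microsoft.KubernetesConfiguration Microsoft.ExtendedLocation"

register_providers() {
  local ns attempts state
  for ns in $PROVIDERS; do
    az provider register --namespace "$ns" >/dev/null || return 1
  done
  for ns in $PROVIDERS; do
    attempts=0
    while :; do
      state=$(az provider show --namespace "$ns" --query registrationState -o tsv)
      [ "$state" = "Registered" ] && break
      attempts=$((attempts + 1))
      if [ "$attempts" -ge "${MAX_ATTEMPTS:-60}" ]; then
        echo "timed out waiting for $ns (last state: $state)" >&2
        return 1
      fi
      sleep "${POLL_SECONDS:-10}"
    done
    echo "$ns: Registered"
  done
}
```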

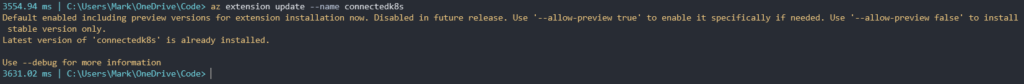

The final step before we set up the integration is to make sure the connectedk8s Azure CLI extension is installed and up to date.

You can install it with

az extension add --name connectedk8s

or update an existing installation with

az extension update --name connectedk8s
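If you’d rather not decide between the two commands, a small wrapper can check whether the extension is already present first. This is a minimal sketch; ensure_connectedk8s is a hypothetical helper built only on the az extension show, add, and update subcommands.

```shell
# Hypothetical helper: update connectedk8s if it is installed, otherwise install it.
ensure_connectedk8s() {
  if az extension show --name connectedk8s >/dev/null 2>&1; then
    az extension update --name connectedk8s
  else
    az extension add --name connectedk8s
  fi
}
```

Recent Azure CLI releases also accept az extension add --upgrade --name connectedk8s, which installs or updates in a single command. Check az extension add --help on your version.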

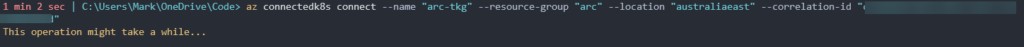

OK, now we are finally ready to start the integration between our VMware TKG cluster and Azure Arc using that last az command.

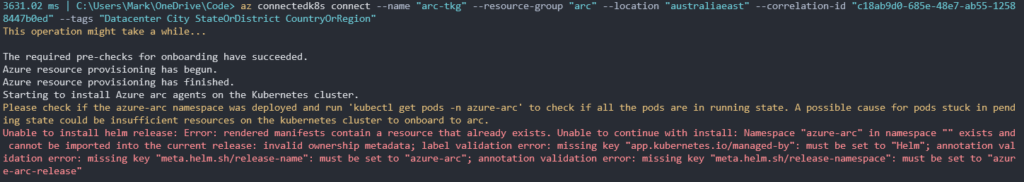

az connectedk8s connect --name "arc-tkg" --resource-group "arc" --location "australiaeast" --correlation-id "1111111-1111-1111-1111-111111111111"

There are still a few crafty tricks we need to perform in our VMware TKG cluster for the integration to succeed. Immediately after running the az connectedk8s connect command, we need to jump back into our TKG cluster and apply some Pod Security Admission labels, as I mentioned at the top of this post. Unfortunately, these labels can only be applied after the az CLI command has created each respective namespace in Kubernetes. Furthermore, if you create these namespaces and labels beforehand, the Azure Arc integration will fail because the resources already exist. Frustrating, I know!

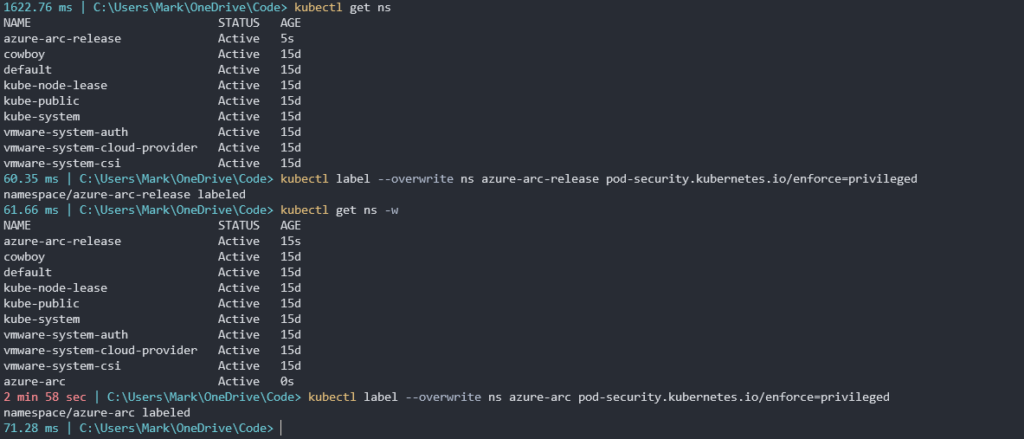

Timing is critical here! Run kubectl get ns. You should see a new namespace called azure-arc-release. This is an ephemeral namespace created for Arc validation checks. As soon as you see it, apply a PSA label to it.

kubectl label --overwrite ns azure-arc-release pod-security.kubernetes.io/enforce=privileged

Next, run kubectl get ns -w and wait for the azure-arc namespace to be created. It will take a few minutes to appear. When it does, apply the same PSA label to this namespace.

kubectl label --overwrite ns azure-arc pod-security.kubernetes.io/enforce=privileged
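Because the timing is the fiddly part, one option is to start a watcher for each namespace in the background just before running az connectedk8s connect, so that the label is applied as soon as the namespace appears. This is a sketch under assumptions: label_when_created is a hypothetical helper, and the 2-second poll interval (POLL_SECONDS) and 600-poll limit (MAX_ATTEMPTS) are illustrative. The interval is kept short because azure-arc-release is short-lived.

```shell
# Hypothetical helper: wait for a namespace to exist, then apply the privileged
# Pod Security Admission enforce label. Gives up after MAX_ATTEMPTS polls.
label_when_created() {
  local ns="$1" attempts=0
  until kubectl get namespace "$ns" >/dev/null 2>&1; do
    attempts=$((attempts + 1))
    if [ "$attempts" -ge "${MAX_ATTEMPTS:-600}" ]; then
      echo "gave up waiting for namespace $ns" >&2
      return 1
    fi
    sleep "${POLL_SECONDS:-2}"
  done
  kubectl label --overwrite namespace "$ns" pod-security.kubernetes.io/enforce=privileged
}

# Usage: start both watchers, then run the az connectedk8s connect command.
#   label_when_created azure-arc-release &
#   label_when_created azure-arc &
```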

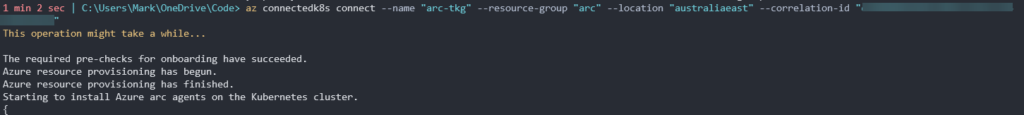

We can now jump back over to our az connectedk8s connect command, which should be continuing with the installation as normal.

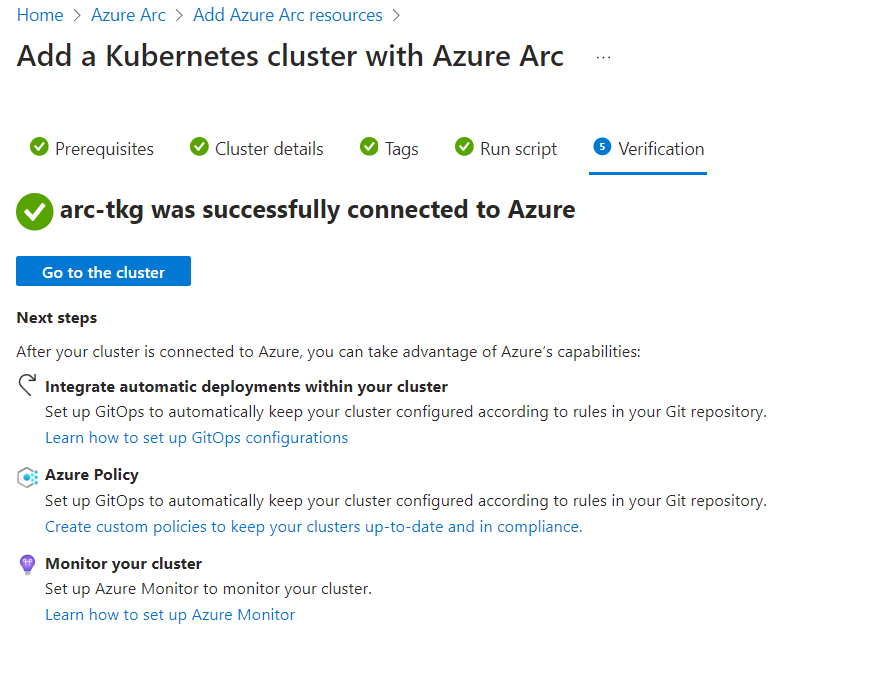

Once it completes, navigate back to the browser window we left open and click Next to reach the last step, which verifies that our cluster was successfully connected to Azure.
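You can also confirm the connection from the CLI. This is a minimal sketch; check_arc_connected is a hypothetical helper that defaults to the arc-tkg cluster and arc resource group used in this walkthrough, and it reads the connectivityStatus property returned by az connectedk8s show.

```shell
# Hypothetical helper: succeed only if Azure reports the cluster as Connected.
# Arguments: [cluster name] [resource group], defaulting to this walkthrough's values.
check_arc_connected() {
  local status
  status=$(az connectedk8s show --name "${1:-arc-tkg}" --resource-group "${2:-arc}" \
    --query connectivityStatus -o tsv) || return 1
  echo "connectivityStatus: $status"
  [ "$status" = "Connected" ]
}
```

From the cluster side, kubectl get pods -n azure-arc lists the Arc agent pods, which should all be Running.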

Now we’re almost done, but not quite. I know, I know, but we’re almost there, I promise. There’s one more step: giving Arc the permissions it requires in our TKG cluster. Click Go to the cluster.

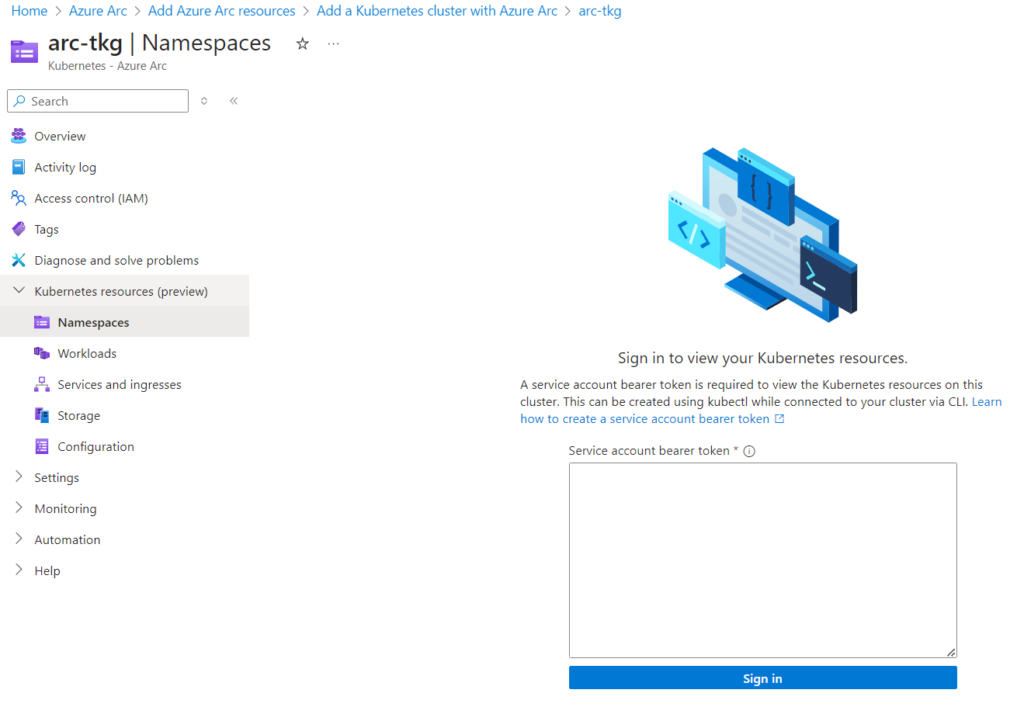

Now select Kubernetes resources.

Here we need to enter a service account bearer token to give Arc permission to access our TKG cluster resources. Microsoft provides a link to a page with the commands if we need help. Basically, we need to create a service account and a cluster role binding for it.

Head back over to our TKG cluster and run the following commands.

kubectl create serviceaccount arc-user -n default

kubectl create clusterrolebinding arc-user-binding --clusterrole cluster-admin --serviceaccount default:arc-user

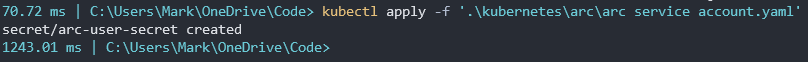

Save the following YAML as a file (for example, arc-user-secret.yaml) and apply it with kubectl apply -f arc-user-secret.yaml to create a token secret for the new service account.

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: arc-user-secret
  namespace: default   # must match the namespace of the arc-user service account
  annotations:
    kubernetes.io/service-account.name: arc-user
type: kubernetes.io/service-account-token
```

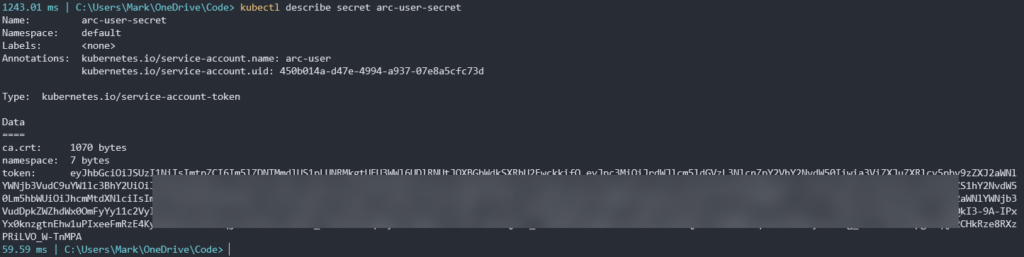

Run kubectl describe secret arc-user-secret to display the token.

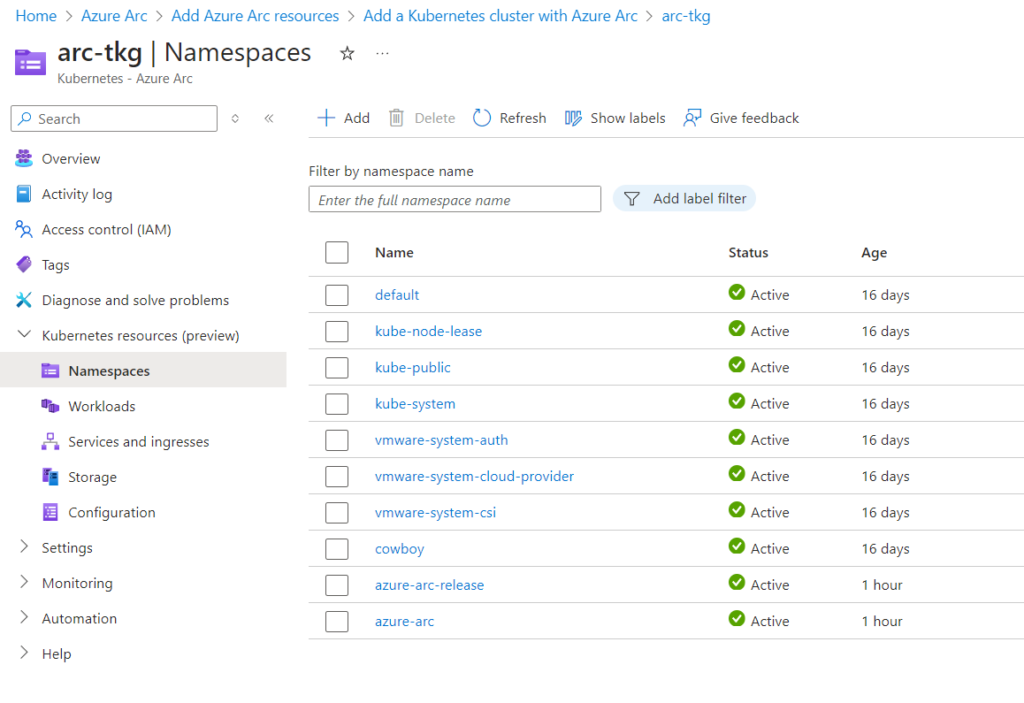
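If you’d rather not copy the token out of the describe output by eye, you can read it straight from the secret. Secret data is stored base64-encoded, so it needs decoding. This is a minimal sketch; get_arc_token is a hypothetical helper that defaults to the arc-user-secret secret in the default namespace, and it assumes a base64 that accepts -d (for example, GNU coreutils on Linux or WSL).

```shell
# Hypothetical helper: print the decoded service account token from the secret.
# Arguments: [secret name] [namespace], defaulting to this walkthrough's values.
get_arc_token() {
  kubectl get secret "${1:-arc-user-secret}" -n "${2:-default}" \
    -o jsonpath='{.data.token}' | base64 -d
}

# Usage: get_arc_token; echo
```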

Copy the token, paste it back into the Arc browser window, and click Sign in. If successful, the portal should now display the resources inside our VMware TKG cluster, including Pods, Services, and ConfigMaps.

Hooray, you made it! Nice work. Go get a coffee.

In summary, the process of integrating a VMware TKG cluster with Azure Arc is not that bad. The most frustrating part is dealing with Pod Security Admission in TKG clusters running Kubernetes v1.25+. I’m still hopeful there is a way to configure this at the cluster level, but I haven’t found a solution yet. With a little more research, I’m sure you could modify the Arc installation scripts to perform these steps too. For now, though, this approach does the job, and I’ve been able to perform this integration successfully and consistently.

If I’m honest as well, noting I’m a VMware employee, Azure Arc does appear to be a direct competitor to VMware Tanzu Mission Control (TMC). Having also used TMC, I find Arc much less refined and more basic. TMC’s capabilities and installation process feel more straightforward for integrating a conformant Kubernetes cluster. TMC can also be installed and run on-premises, which is still very appealing to many customers.