Openfiler

Catch up on the start of my Virtual Storage Appliance experiment in Part 1.

First up in my VSA experiment is Openfiler, an Open Source SAN/NAS solution. Openfiler comes with a raft of features, and for a home test lab it's more than you could possibly ask for. It does both block- and file-based storage: CIFS, NFS, RAID, snapshots, the list goes on. For my test lab I focused on the block-based iSCSI features. Openfiler is available as an Open Source edition or with commercial support, the latter adding advanced features such as High Availability, replication, and a Fibre Channel target.

The first objective was to install it. The download comes as an ISO file, and installation was very simple, with straightforward instructions supplied for either a text-based or GUI install.

After a few simple clicks the installation was complete. It is about as close to an appliance install as you can get without being an appliance.

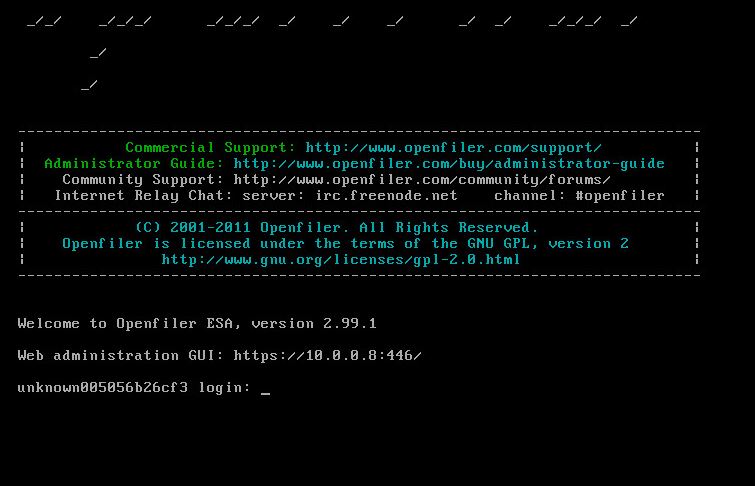

Once installed and booted, you are taken to a console prompt. All administration is done via a web GUI. The admin GUI and the user GUI are both accessed through the same URL: the admin GUI with the root account, and the user configuration with the Openfiler account.

Instructions were non-existent for the non-commercial edition, with access to just the community forums. For the SAN uninitiated, configuration would no doubt be a challenge.

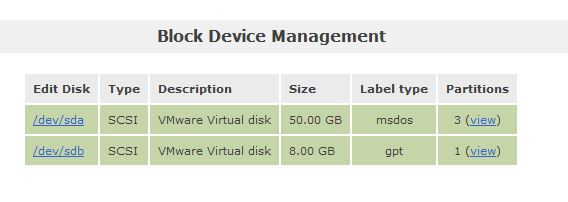

I installed Openfiler on a 50 GB VM drive within ESXi and was immediately faced with an issue: I couldn't create a usable volume. At midnight on a work day and with no instructions, I might have been asking a bit much of myself. When the words started floating on the screen I decided to call it a night and came back to it the next day. Faced with the same issue the following day, still unable to create a volume, I started trawling through the forums.

A few posts pointed to the disk's partition table type being msdos and suggested converting the disk to gpt. Instead, I added a new virtual disk, which was immediately detected as gpt. That allowed me to edit the disk and partition it.
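If you want to confirm which partition table a disk carries before handing it to Openfiler, the label type can be read from the first two sectors. Below is a minimal Python sketch, assuming 512-byte logical sectors: an msdos (MBR) disk ends sector 0 with the 0x55AA boot signature, and a gpt disk also has a header at LBA 1 starting with the ASCII text "EFI PART". The helper names and the `/dev/sdb` path below are hypothetical.

```python
SECTOR = 512  # assumption: 512-byte logical sectors (4Kn disks differ)


def partition_table_type(head: bytes) -> str:
    """Classify the first two sectors of a disk as 'gpt', 'msdos' or 'unknown'."""
    if len(head) < 2 * SECTOR:
        raise ValueError("need at least the first two sectors")
    # MBR disks, and the protective MBR on GPT disks, end sector 0 with 0x55AA.
    if head[510:512] != b"\x55\xaa":
        return "unknown"
    # GPT keeps its header in LBA 1, starting with the signature "EFI PART".
    if head[SECTOR:SECTOR + 8] == b"EFI PART":
        return "gpt"
    return "msdos"


def read_head(path: str) -> bytes:
    """Read the first two sectors from a device node or disk image file."""
    with open(path, "rb") as f:
        return f.read(2 * SECTOR)
```

For example, `partition_table_type(read_head("/dev/sdb"))`, run as root on a Linux box with a hypothetical second disk at `/dev/sdb`, would report which label the disk carries. The GPT check comes second because a gpt disk's protective MBR carries the same 0x55AA signature as a plain msdos disk.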

In typical Linux fashion, partitioning was based around start and end cylinder numbers. Again, not that intuitive for non-Linux admins. Once a partition was created, volumes needed to be created. The process became a little easier at this point: volumes could be sized in MB, with a dropdown menu to select the filesystem type. As I wanted iSCSI, I selected block.
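Cylinder numbers translate to sizes by simple multiplication. This Python sketch assumes the common translated geometry of 255 heads and 63 sectors per track with 512-byte sectors (8,225,280 bytes per cylinder); if the partition screen reports a different geometry for your disk, swap those constants. The function names and the starting cylinder of 1 are illustrative.

```python
# Assumed translated disk geometry; replace with what the partition screen shows.
HEADS = 255
SECTORS_PER_TRACK = 63
SECTOR_BYTES = 512
CYLINDER_BYTES = HEADS * SECTORS_PER_TRACK * SECTOR_BYTES  # 8,225,280 bytes


def cylinders_to_mib(start: int, end: int) -> float:
    """Size in MiB of the inclusive cylinder range start..end."""
    if end < start:
        raise ValueError("end cylinder must not precede start cylinder")
    return (end - start + 1) * CYLINDER_BYTES / 2**20


def cylinders_needed(size_bytes: int) -> int:
    """Fewest whole cylinders holding at least size_bytes (ceiling division)."""
    return -(-size_bytes // CYLINDER_BYTES)


# A 2 GiB partition starting at cylinder 1 needs 262 cylinders (end cylinder 262).
n = cylinders_needed(2 * 2**30)
print(n, round(cylinders_to_mib(1, n), 1))  # prints: 262 2055.2
```

Because partitions end on whole cylinders, the result is rounded up, which is why the partition comes out slightly larger than the 2048 MiB asked for.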

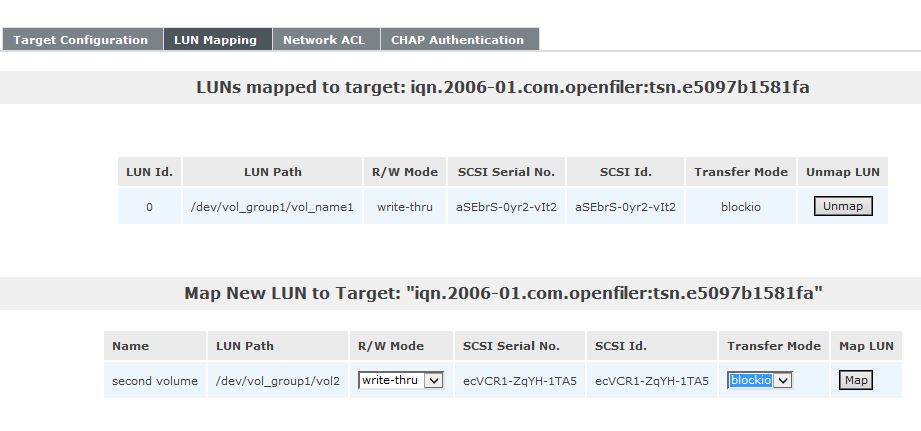

While exploring the tab menus in the web GUI after installation, I noticed that most services were disabled, including iSCSI. So I changed it to Enabled and clicked Start. Back on the Volume tab, I went to iSCSI targets. There were no iSCSI targets, just a button to add one, so I clicked it. Next, under LUN mappings, I could see the volumes I had created earlier, and I clicked Map on each one.
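Each target added this way is identified by an iSCSI Qualified Name (IQN), which RFC 3720 defines as `iqn.`, a year-month date, a reversed domain name for the naming authority, and an optional suffix after a colon. A small parser like the one below can sanity-check a name before typing it into an initiator. It is a sketch that only accepts the lowercase ASCII subset of valid names; `parse_iqn` and the sample names are hypothetical.

```python
import re
from typing import NamedTuple, Optional

# One DNS label: letters/digits, hyphens allowed inside but not at either end.
_LABEL = r"[a-z0-9](?:[a-z0-9-]*[a-z0-9])?"
# "iqn." + yyyy-mm + "." + reversed domain + optional ":" suffix (ASCII subset only).
IQN_RE = re.compile(
    r"iqn\.(?P<year>\d{4})-(?P<month>\d{2})\."
    r"(?P<authority>" + _LABEL + r"(?:\." + _LABEL + r")*)"
    r"(?::(?P<suffix>[a-z0-9.:-]+))?"
)


class IQN(NamedTuple):
    year: int
    month: int
    authority: str          # naming authority, written as a reversed domain name
    suffix: Optional[str]   # free-form part after the colon, if any


def parse_iqn(name: str) -> IQN:
    """Split an iqn-format iSCSI name into its parts, raising ValueError if invalid."""
    m = IQN_RE.fullmatch(name.strip().lower())  # iSCSI names compare case-insensitively
    if m is None:
        raise ValueError(f"not an iqn-format name: {name!r}")
    month = int(m["month"])
    if not 1 <= month <= 12:
        raise ValueError(f"invalid month in {name!r}")
    return IQN(int(m["year"]), month, m["authority"], m["suffix"])
```

For instance, `parse_iqn("iqn.2006-01.com.openfiler:tsn.example")` splits a hypothetical target name into year 2006, month 1, authority `com.openfiler` and suffix `tsn.example`.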

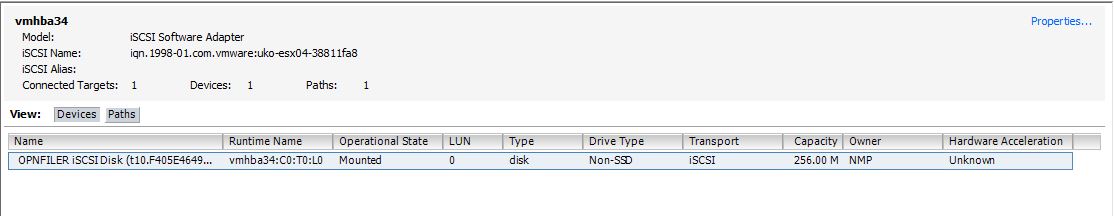

At this point, using my previous knowledge of SANs, I felt I had done everything needed to present that storage to a device, in my case an ESXi host. I had already preconfigured iSCSI on the ESXi host, with a software adapter added and port bindings all set up. I added the Openfiler IP as a target and then performed a rescan.
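Before rescanning in ESXi, it can save some head-scratching to check that the Openfiler box is actually listening for iSCSI. This Python sketch simply attempts a TCP connection to port 3260, the IANA-registered iSCSI port; the function name and the address in the usage line are hypothetical. A successful connection only proves the portal is reachable, not that LUNs are mapped or that the initiator is permitted.

```python
import socket

ISCSI_PORT = 3260  # IANA-registered iSCSI target port


def iscsi_portal_reachable(host: str, port: int = ISCSI_PORT, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to the iSCSI portal can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # refused, unreachable, or timed out
        return False
```

Something like `iscsi_portal_reachable("192.168.1.50")` (hypothetical Openfiler address) returning `False` points at a stopped iSCSI service or a network problem rather than an ESXi configuration issue.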

Once the scan completed, my disk appeared. While still in ESXi, I went over to Storage and attempted to add a new datastore, but the disks I had created weren't appearing there. Now after midnight again, I was ready to throw in the towel. After a short think about what was going on, I remembered that a VMFS datastore requires a small amount of storage overhead. So I created a new volume in Openfiler with a more realistic size of 2 GB (rather than the piddly 256 MB I originally used). This time the disk appeared and I was able to create a datastore. As expected, close to 800 MB was gone after formatting and VMFS overhead.
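The numbers from the second attempt make the overhead easy to quantify. This Python sketch assumes the 2 GB volume was 2048 MB and uses the approximate 800 MB loss observed; the second function also assumes, purely for illustration, that a similar fixed overhead would apply to a smaller volume, which is a rough simplification of how VMFS sizes its metadata. Both function names are hypothetical.

```python
from typing import Tuple


def formatting_overhead(volume_mb: float, usable_mb: float) -> Tuple[float, float]:
    """Space lost to formatting, in MB and as a percentage of the raw volume."""
    lost = volume_mb - usable_mb
    return lost, 100.0 * lost / volume_mb


def usable_with_fixed_overhead(volume_mb: float, overhead_mb: float) -> float:
    """Usable space if overhead were a fixed amount (rough assumption), floored at 0."""
    return max(0.0, volume_mb - overhead_mb)


# Approximate figures from this test: a 2 GB (assumed 2048 MB) volume lost ~800 MB.
lost, pct = formatting_overhead(2048, 2048 - 800)
print(f"{lost:.0f} MB lost ({pct:.1f}%)")  # prints "800 MB lost (39.1%)"

# Under the fixed-overhead assumption, the original 256 MB volume leaves nothing usable.
print(usable_with_fixed_overhead(256, 800))  # prints 0.0
```

Roughly two fifths of a 2 GB volume going to overhead is a good reminder to size test LUNs generously.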

Putting aside the final step of adding a disk into ESXi, the whole process of installing and configuring Openfiler went relatively smoothly. It did require a little troubleshooting, and not having access to official documentation, only community forums, didn't help. The community is great, but as with any community, people are quick to lose interest when a solution isn't straightforward. Having a good working knowledge of SANs and iSCSI went a long way. Some features are worth further investigation, namely snapshots, LDAP authentication, and NFS. My initial testing of snapshots hasn't worked, so no doubt it will require more time on the forums.

I did later come across a site that specialises in virtualization solutions, with some installation and configuration documentation for Openfiler and ESXi. I've provided a link to the PDF below. It's actually quite a good step-by-step document.

References
